As of August 2022, 69.99% of all online shopping carts were abandoned instead of purchased. That’s according to cumulative data gathered by independent research firm Baymard Institute.

Shopping cart abandonment affects small and large ecommerce businesses alike. But it’s at scale that the pain truly intensifies.

Let’s say your ecommerce site brings in 125,000 visitors per month, your average order value is $100, and your visitor-to-sale conversion rate is 0.92%.

If you increased your conversion rate by a mere half a percentage point (from 0.92% to 1.42%), you’d add an extra $62,500 of revenue every month. Over a year, that’s an extra $750,000.
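To check the arithmetic, here is a small TypeScript sketch using the example’s own figures. It assumes every conversion is a single order at the $100 average order value, and it reads the 0.5% increase as half a percentage point (0.92% to 1.42%), which is the reading that produces a $62,500 monthly lift. The function name `monthlyRevenue` is purely illustrative.

```typescript
// Revenue impact of a conversion-rate change, using the example figures.
// Assumption: each conversion is one order at the average order value (AOV).
function monthlyRevenue(visitors: number, conversionRate: number, aov: number): number {
  return visitors * conversionRate * aov;
}

const visitors = 125000;
const aov = 100;
const baseRate = 0.0092; // 0.92%
const improvedRate = baseRate + 0.005; // +0.5 percentage points = 1.42%

const revenueBefore = Math.round(monthlyRevenue(visitors, baseRate, aov)); // 115000
const revenueAfter = Math.round(monthlyRevenue(visitors, improvedRate, aov)); // 177500
const monthlyLift = revenueAfter - revenueBefore; // 62500
const annualLift = monthlyLift * 12; // 750000

// For comparison: a 0.5% *relative* increase (0.92% -> 0.9246%) adds far less.
const relativeLift = Math.round(monthlyRevenue(visitors, baseRate * 1.005, aov)) - revenueBefore; // 575

console.log({ revenueBefore, revenueAfter, monthlyLift, annualLift, relativeLift });
```

The comparison line shows why the distinction matters: the same "0.5%" means either a $62,500 or a $575 monthly lift depending on whether it is read as percentage points or as a relative change.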

Numbers like that are exactly why conversion-rate optimization is big business. The question is: Where should you start?

What is Shopify cart abandonment?

Shopify cart abandonment is when a customer adds items to their online shopping cart on a Shopify store, but then fails to complete the purchase. Shopping cart abandonment is common due to difficult checkout processes, high shipping costs, and wishful thinking.

What’s the average cart abandonment rate?

The average cart abandonment rate is just under 70%. Research from Statista also found that when UK shoppers abandon carts, fewer than a third return to buy the items they left behind, while a quarter buy the same product from a competitor.
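One common way to calculate the rate is the share of carts that never turn into orders. The sketch below assumes you can count carts created and orders completed over the same period; the function name and the counts are hypothetical, so substitute the equivalent metrics from your own analytics.

```typescript
// Cart abandonment rate = 1 - (orders completed / carts created),
// measured over the same time window. Counts below are made up for illustration.
function cartAbandonmentRate(cartsCreated: number, ordersCompleted: number): number {
  if (cartsCreated <= 0) {
    throw new Error("cartsCreated must be greater than zero");
  }
  if (ordersCompleted > cartsCreated) {
    throw new Error("ordersCompleted cannot exceed cartsCreated");
  }
  return 1 - ordersCompleted / cartsCreated;
}

const rate = cartAbandonmentRate(2000, 600);
console.log(`${(rate * 100).toFixed(1)}% of carts abandoned`); // "70.0% of carts abandoned"
```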

Why do people abandon carts in the first place? Baymard Institute’s latest research found these common reasons:

Extra costs too high (shipping, tax, fees) (48%)

The site wanted me to create an account (24%)

Delivery was too slow (22%)

I didn’t trust the site with my credit card information (18%)

Too long/complicated checkout process (17%)

I couldn’t see/calculate total order cost up-front (16%)

Website had errors/crashed (13%)

Returns policy wasn’t satisfactory (12%)

There weren’t enough payment methods (9%)

The credit card was declined (4%)

Baymard Institute estimates that $260 billion of lost orders are recoverable in the US and EU solely through better checkout flow and design. If you’re running a Shopify store, let’s look at ways to reduce cart abandonment and recover lost sales.

11 ways to reduce shopping cart abandonment on Shopify

Send abandoned cart emails

Start a rewards program

Use live chat

Offer many payment options

Simplify checkout

Use exit intent pop-ups

Run retargeting ads

Build trust during checkout

Use social proof

Make returns easy

Send push notifications

1. Send abandoned cart emails

Most ecommerce marketers dread cart abandonment. But here’s the fun part: cart recovery actually makes you money. According to Klaviyo, brands in its data set generated more than $60 million in sales from abandoned cart email campaigns over a three-month window.

Abandoned cart emails also have the best performance of any email marketing strategy. Overall performance metrics for abandoned cart emails in Klaviyo’s data set included:

Open rate: 41.18%

Click rate: 9.50%

Revenue per recipient: $5.81

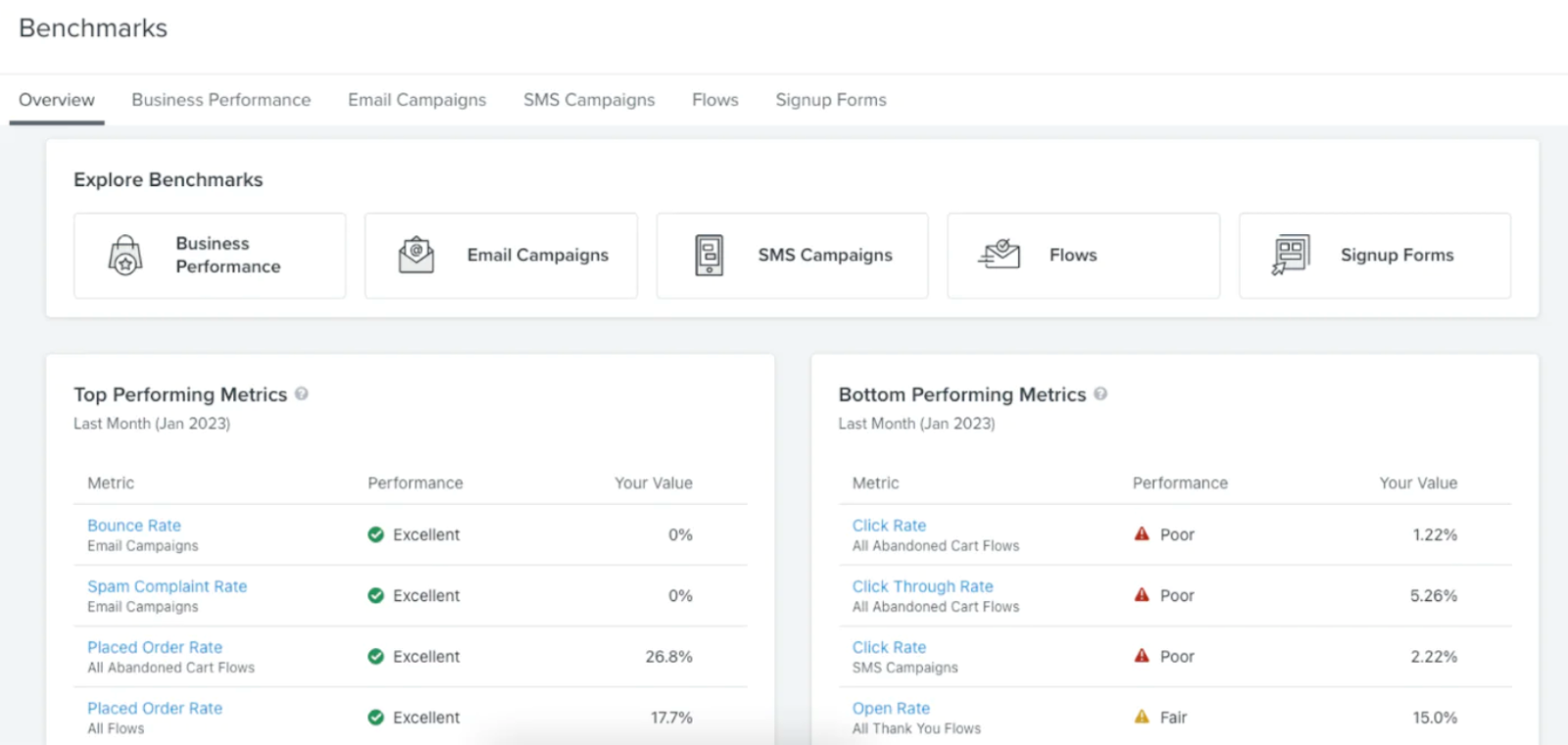
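To see what those averages mean in practice, you can multiply them by the number of shoppers who receive your abandoned cart emails. This is a back-of-the-envelope sketch: it assumes all three figures are measured per recipient, and the 10,000-recipient volume and the function name `projectCartEmails` are hypothetical. Your store’s results will differ from Klaviyo’s aggregate benchmarks.

```typescript
// Back-of-the-envelope projection from Klaviyo's published abandoned cart averages.
// Assumptions: all three rates are per recipient; the recipient count is hypothetical.
const BENCHMARK_OPEN_RATE = 0.4118; // 41.18%
const BENCHMARK_CLICK_RATE = 0.095; // 9.50%
const BENCHMARK_REVENUE_PER_RECIPIENT = 5.81; // USD

interface EmailProjection {
  opens: number;
  clicks: number;
  revenue: number;
}

function projectCartEmails(recipients: number): EmailProjection {
  return {
    opens: Math.round(recipients * BENCHMARK_OPEN_RATE),
    clicks: Math.round(recipients * BENCHMARK_CLICK_RATE),
    revenue: Math.round(recipients * BENCHMARK_REVENUE_PER_RECIPIENT),
  };
}

const projection = projectCartEmails(10000); // hypothetical: 10,000 recipients per month
console.log(projection); // opens 4118, clicks 950, revenue 58100
```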

If you want to leverage email and SMS abandoned cart recovery, add the Klaviyo app to your store. Klaviyo is an official Shopify strategic partner. Brands like Glossier, Osea, and Loeffler Randall use Klaviyo’s powerful automation features to create profitable email marketing campaigns.

You can also sync Klaviyo with all your Shopify data, such as website activity, tags, catalog, and coupons, and benchmark your business performance against other brands like yours.

2. Start a rewards program

Rewards programs turn regular customers into high spenders. A 2021 Harvard Business Review (HBR) study found that targeted loyalty program promotions increased purchase likelihood by 6.1%.

HBR found that rewards programs can lead to customers buying more products from you versus from a competitor store. It also found that loyal customers bought more expensive, premium versions of the same products they had previously bought from a retailer.

For these two customer types, the loyalty program increased spending by roughly 50% during the two-year study.

Fortunately, you can easily start a rewards program today with two Shopify apps:

Smile, which powers loyalty, referrals, and VIP rewards programs. Creating a program takes minutes, and you’re supported by a 24/7 team of experts.

LoyaltyLion, which helps you create an advocate community with referrals, reviews, and other actions.

These apps help you encourage repeat purchases by letting online shoppers earn and redeem points. You can customize your program to match your brand identity and integrate it with other partners, like Klaviyo.
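Under the hood, a points program behaves like a ledger: purchases add points, and redemptions turn points into discounts. The sketch below is a hypothetical model of that idea, not how Smile or LoyaltyLion implement it; the earn rate (1 point per $1) and reward rate (100 points for $5 off) are made-up examples of settings you would configure in the app.

```typescript
// Hypothetical points ledger. Rates are illustrative, not any app's defaults.
const POINTS_PER_DOLLAR = 1;
const POINTS_PER_REWARD = 100;
const REWARD_VALUE_DOLLARS = 5;

class PointsAccount {
  private balance = 0;

  // Award whole points for an order; fractional dollars are not rewarded.
  earn(orderTotalDollars: number): number {
    if (orderTotalDollars < 0) {
      throw new Error("orderTotalDollars must not be negative");
    }
    const earned = Math.floor(orderTotalDollars * POINTS_PER_DOLLAR);
    this.balance += earned;
    return earned;
  }

  // Redeem as many whole rewards as the balance allows; returns the discount in dollars.
  redeemAll(): number {
    const rewards = Math.floor(this.balance / POINTS_PER_REWARD);
    this.balance -= rewards * POINTS_PER_REWARD;
    return rewards * REWARD_VALUE_DOLLARS;
  }

  get points(): number {
    return this.balance;
  }
}

const account = new PointsAccount();
account.earn(120.5); // +120 points
account.earn(95); // +95 points, 215 in total
const discount = account.redeemAll(); // $10 off, 15 points left over
console.log(discount, account.points);
```

Keeping leftover points in the balance, rather than rounding them away, is what makes the next purchase feel like progress toward another reward.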

3. Use live chat

Live chat is another way to reduce Shopify cart abandonment. You can use live chat to help customers with orders and provide customer support. For example, when an agent answers a shopper’s questions about a product, that shopper is less likely to abandon their cart.

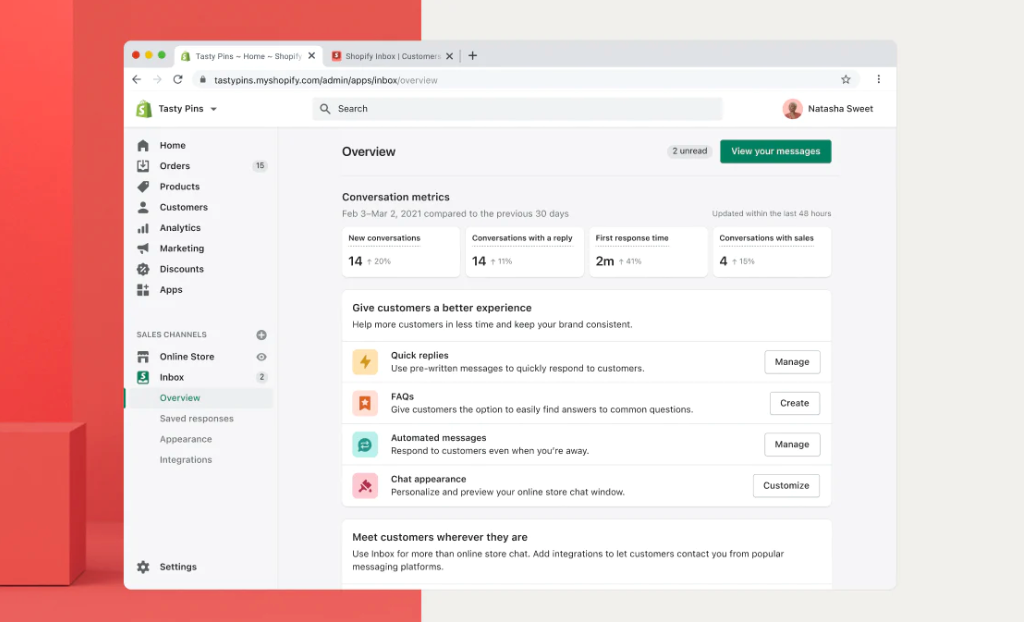

Shopify Inbox is a free messaging tool that lets you chat with customers while they shop. You can manage conversations from your online store chat, the Shop app, Instagram, and Messenger. In fact, 70% of all Shopify Inbox conversations are with shoppers making a purchase.

While you chat, you can use real-time customer data like products viewed, what’s in their cart, and past orders to tailor each message. You can even recommend products and give discounts without leaving the chat.

4. Offer many payment options

A lack of payment methods is one of the common reasons shoppers abandon carts: 9% of shoppers in Baymard Institute’s research cited it. By providing multiple payment options, you increase the chances that customers complete their purchase.

Notice how Culture Kings offers several express checkout options in its cart. Shoppers can choose Shop Pay, PayPal, Google Pay, or Meta Pay, or pay with a credit or debit card.

When choosing a payment provider, consider the countries where your business is located and where your customers live. Shopify’s list of payment gateways by country will help you learn which ones are available in your target country and the types of currencies they support.

With Shopify Payments, you can skip lengthy third-party activations and set up payment methods in one click. You can offer a number of express checkout options or even additional methods of payment like cryptocurrency.

5. Simplify checkout

Quick and easy checkout will lower your cart abandonment rate and recover lost sales. In fact, two in three consumers expect to check out in four minutes or less, according to the latest data.

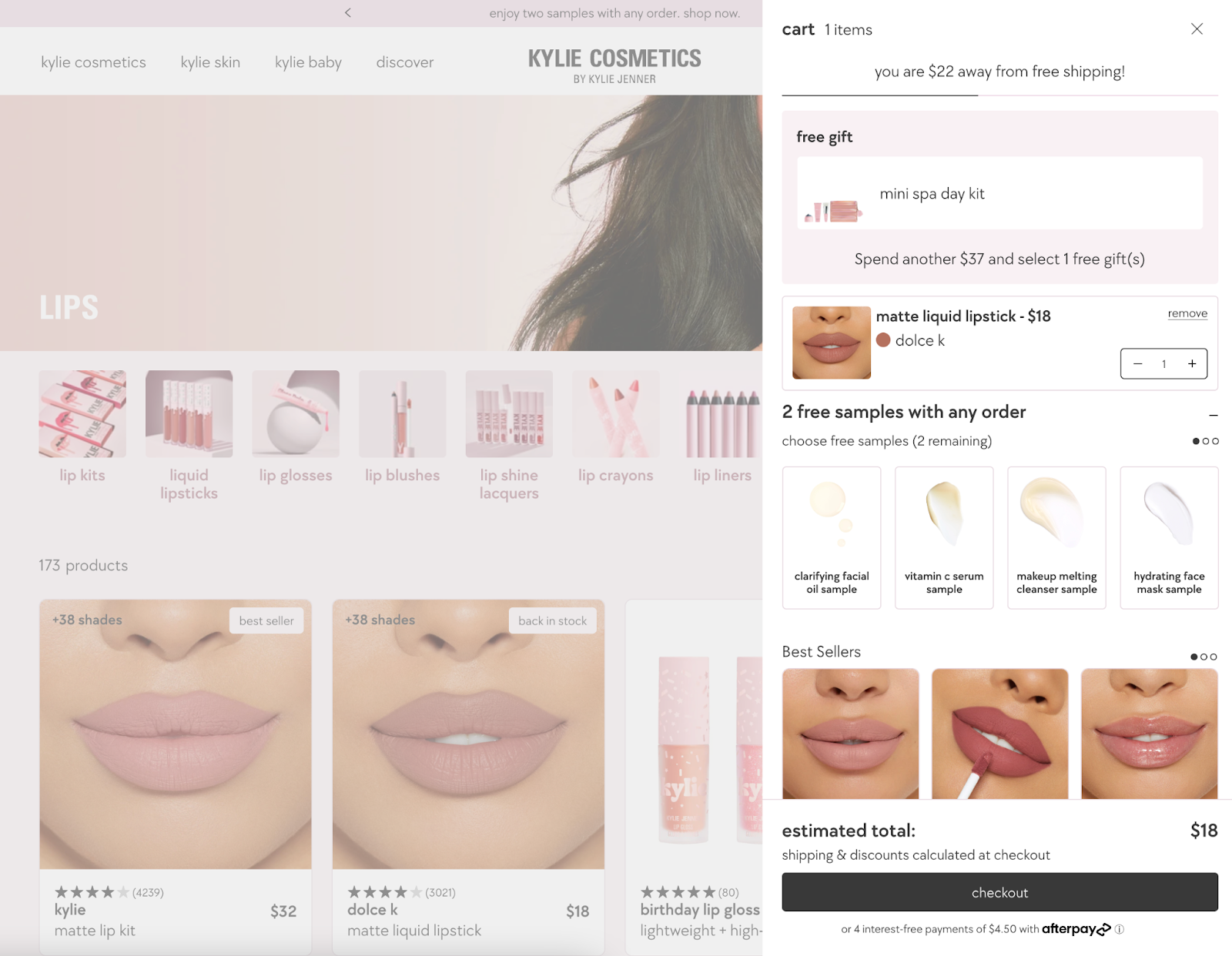

Set up a side cart to make the shopping cart easy to access. Kylie Cosmetics, for example, uses a side cart that lets shoppers see the products in their cart, choose free samples, and browse bestsellers.

After clicking through to the checkout page, shoppers can choose the Shop Pay option and quickly pay for their purchase. All their information is saved, so checkout takes seconds.

To activate Shop Pay:

From your Shopify admin, go to Settings > Payments.

In the Shopify Payments section, click Manage.

In the Shop Pay section, check Shop Pay.

Click Save.

You may also want to add a guest checkout option for your store. Findings from Capterra’s 2022 Online Shopping Survey show that some 43% of consumers prefer guest checkout.

6. Use exit intent pop-ups

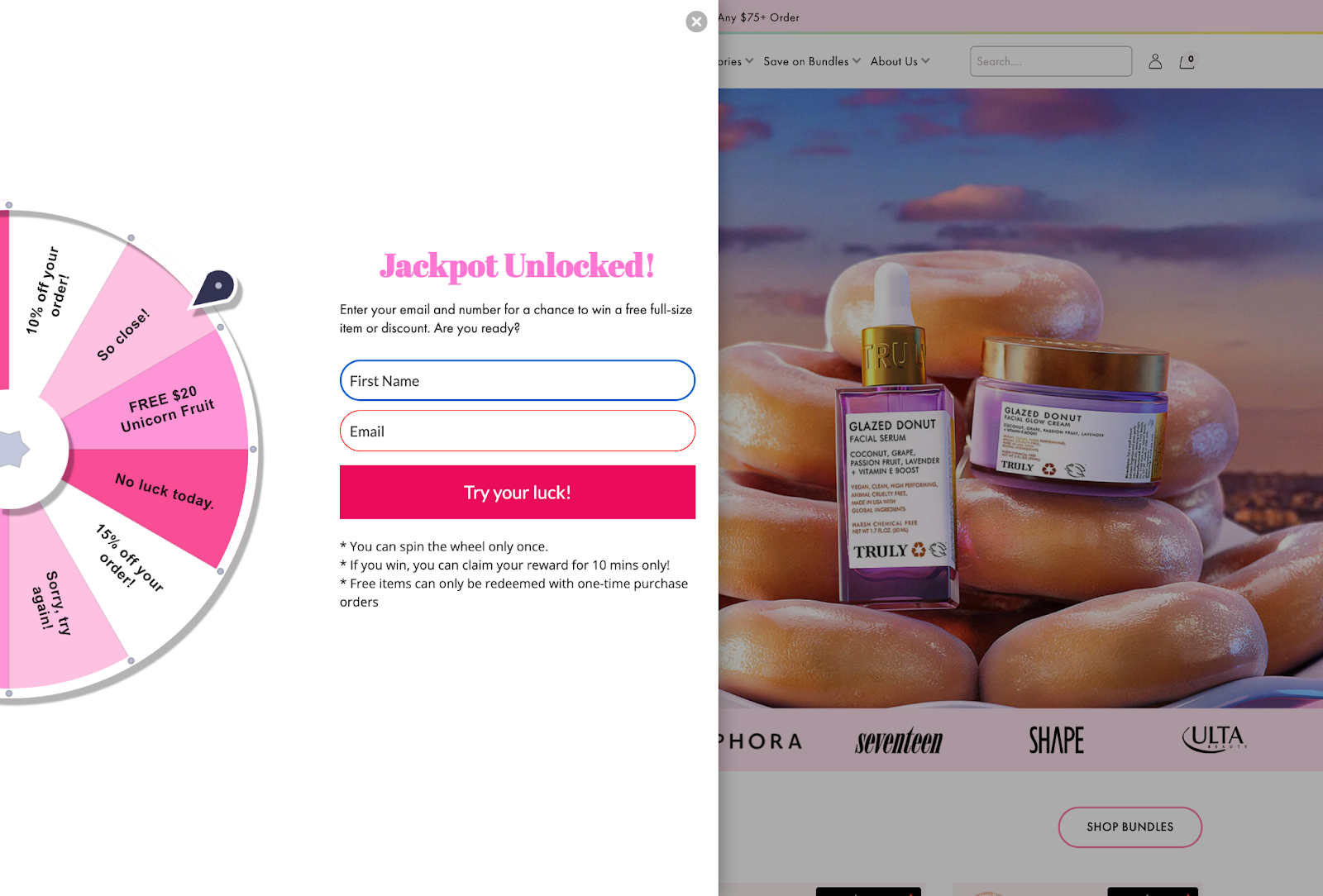

Exit-intent pop-ups encourage website visitors and potential customers to take action before leaving your site. With these pop-ups, you can offer a discount code or free shipping to shoppers who are about to abandon their cart.

According to data from OptiMonk, cart abandonment pop-ups have an average conversion rate of 17.12%.

Beauty brand Truly uses Privy to show a spin-to-win pop-up to visitors who are about to leave its site. Shoppers can win a free item or a discount just by spinning the wheel, which increases the likelihood of conversion. It’s also a fun, easy experience that rewards potential customers.

With a Shopify app like Privy, you can track a site visitor’s movements on a page and trigger an event (like a pop-up or spin-to-win wheel) when the app detects that the visitor is about to leave. Privy also offers templates for building pop-ups quickly, automated A/B testing to see which pop-ups perform best, and tools to grow your email list.

You can also pull products directly into your Privy account, sync your coupon offerings to your campaigns, and more.
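On desktop, exit-intent detection usually comes down to noticing the pointer leaving the page toward the browser’s tabs or address bar. Here is a minimal sketch of that idea; it is not Privy’s implementation, and the 10-pixel threshold, the cart-item check, and `showExitOffer()` are hypothetical. Touch devices have no cursor, so this particular check does not apply there.

```typescript
// Minimal exit-intent detection for desktop browsers.
// Assumptions (hypothetical): exit intent = pointer leaves the window through
// the top edge; show the offer at most once, and only if the cart has items.

interface PointerExit {
  clientY: number; // vertical pointer position when it left, in CSS pixels
  leftWindow: boolean; // true when the mouseout event has no relatedTarget
}

interface ExitIntentState {
  alreadyShown: boolean;
  cartItemCount: number;
}

function isExitIntent(e: PointerExit, s: ExitIntentState, topThresholdPx = 10): boolean {
  if (s.alreadyShown || s.cartItemCount === 0) return false;
  return e.leftWindow && e.clientY <= topThresholdPx;
}

// Hypothetical placeholder for whatever renders your pop-up.
function showExitOffer(): void {
  console.log("Show exit offer (for example, a free shipping code)");
}

// Browser wiring; skipped when there is no `document` (for example, in Node).
type MouseOutLike = { clientY: number; relatedTarget: unknown };
type DocLike = { addEventListener(type: "mouseout", cb: (ev: MouseOutLike) => void): void };
const doc = (globalThis as unknown as { document?: DocLike }).document;

if (doc) {
  const exitState: ExitIntentState = { alreadyShown: false, cartItemCount: 1 }; // stand-in cart count
  doc.addEventListener("mouseout", (ev) => {
    const exit = { clientY: ev.clientY, leftWindow: ev.relatedTarget === null };
    if (isExitIntent(exit, exitState)) {
      exitState.alreadyShown = true;
      showExitOffer();
    }
  });
}
```

Keeping the decision in a pure function (`isExitIntent`) makes the rule easy to test and tweak, for example raising the threshold or skipping shoppers with empty carts.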

7. Run retargeting ads

Most people won’t buy the first time they are on your site.

Ecommerce stores convert only about 5.2% of traffic on average, according to data from Unbounce. Retargeting ads are shown to people who’ve left something behind in their cart and entice them to come back and buy those products.

Cart abandoners are a great group to retarget because they are far along the conversion funnel. Sometimes they only need an incentive, like a discount or free shipping.

“We want to get them [cart abandoners] back. We don’t just want to get them back to any old product page. We want to show them the actual products that were in their shopping cart when they abandoned. We want to retarget them with the products that they actually have added to the cart.”

Ezra Firestone, CEO of BOOM! By Cindy Joseph

Here are a few apps to run and optimize retargeting ads across Google and social media platforms.

8. Build trust during checkout

Building trust isn’t limited to security logos and privacy policies near the “Complete order” button. In some cases, it means bringing a little extra clarity wherever you ask the visitor to make a decision.

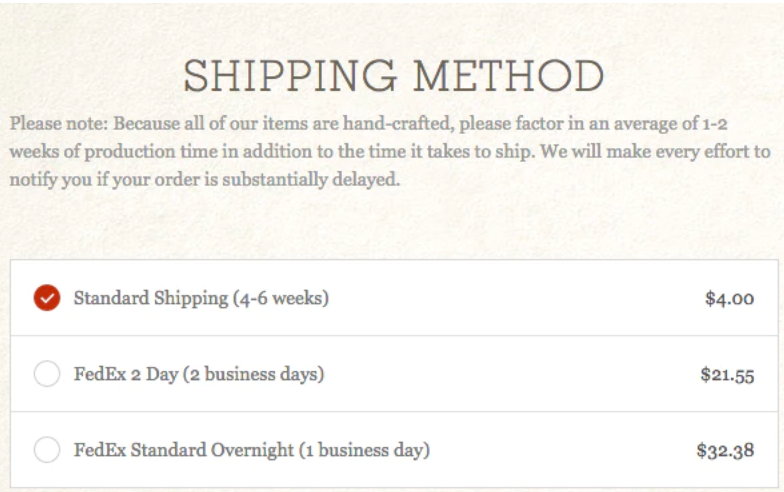

Take this Shipping Method section, for example:

Looking at this, you have no idea how long it will take to receive the order. “Standard shipping” doesn’t estimate how long it could take, and the copy above practically prompts you to abandon the checkout to see how long it will take to receive the product.

However, if you add just a little more clarity to the copy in the Shipping Method section, such as an estimated delivery time for each option, the visitor has all the information needed at the point of decision and can trust themselves to make the right choice for their circumstances.

In addition, most third-party logistics (3PL) providers—particularly those that integrate directly with Shopify Plus—can automate estimated delivery dates.
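As a rough illustration of what that automation computes, the sketch below turns an order date into a delivery window by adding processing and transit days while skipping weekends. The processing and transit times are hypothetical, and the sketch ignores holidays and carrier cut-off times, which a real 3PL integration would account for. A window like this is the kind of detail that can replace a bare “Standard shipping” label.

```typescript
// Estimated delivery window = order date + processing days + transit days,
// counting business days (Monday to Friday) in UTC. Hypothetical inputs;
// holidays and carrier cut-off times are ignored.
function addBusinessDays(start: Date, days: number): Date {
  const d = new Date(start.getTime());
  let remaining = days;
  while (remaining > 0) {
    d.setUTCDate(d.getUTCDate() + 1);
    const dayOfWeek = d.getUTCDay(); // 0 = Sunday, 6 = Saturday
    if (dayOfWeek !== 0 && dayOfWeek !== 6) {
      remaining--;
    }
  }
  return d;
}

function deliveryWindow(
  orderDate: Date,
  processingDays: number,
  minTransitDays: number,
  maxTransitDays: number,
): { earliest: Date; latest: Date } {
  const shipDate = addBusinessDays(orderDate, processingDays);
  return {
    earliest: addBusinessDays(shipDate, minTransitDays),
    latest: addBusinessDays(shipDate, maxTransitDays),
  };
}

const toDay = (d: Date): string => d.toISOString().slice(0, 10);

// Order placed on Friday, 6 January 2023; 1 day to process, 3 to 5 days in transit.
const estimate = deliveryWindow(new Date(Date.UTC(2023, 0, 6)), 1, 3, 5);
console.log(`Standard shipping (arrives ${toDay(estimate.earliest)} to ${toDay(estimate.latest)})`);
// Standard shipping (arrives 2023-01-12 to 2023-01-16)
```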

9. Use social proof

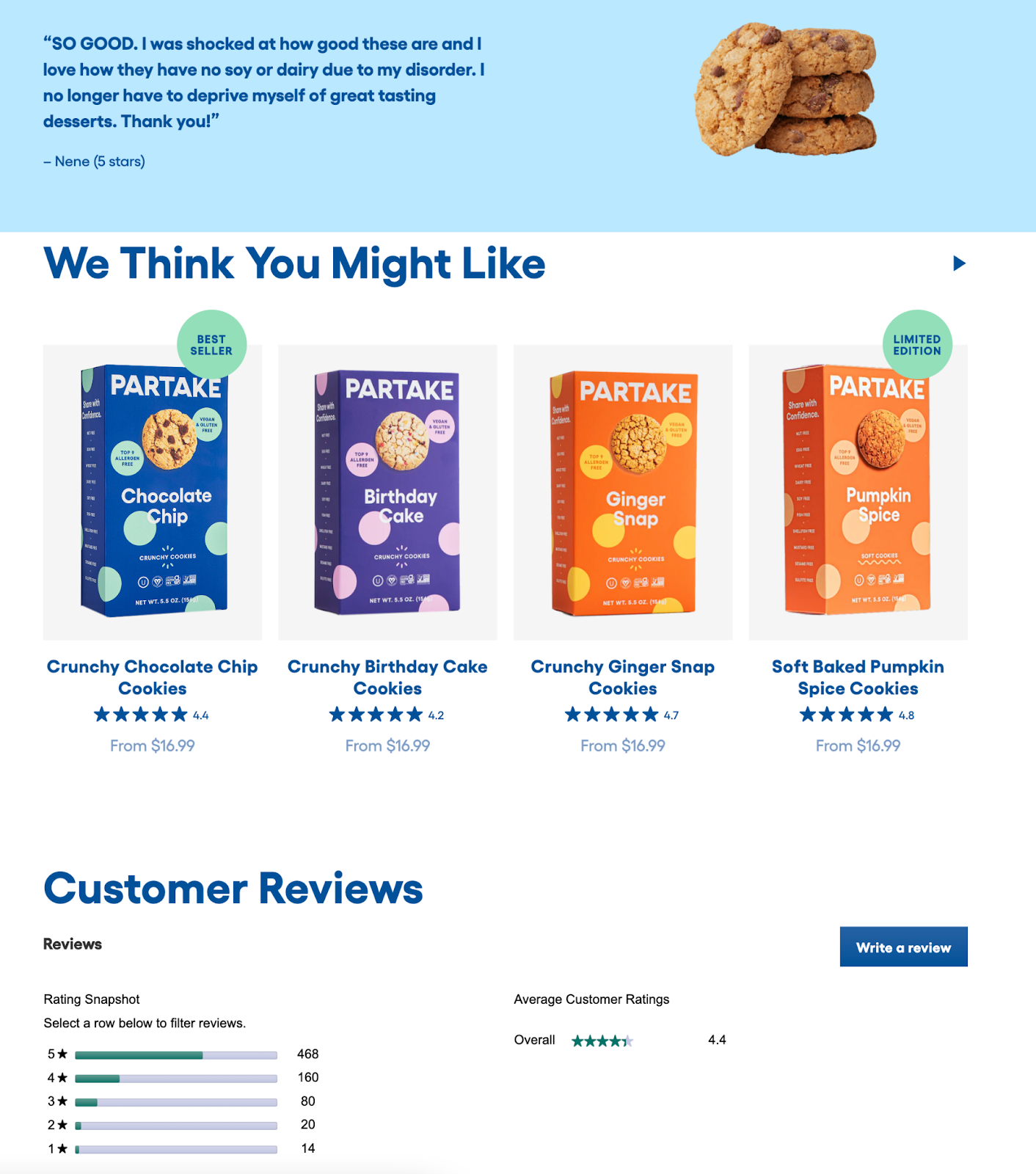

Another way to build trust is to add social proof to your product pages. Customer testimonials show that your products really work and are worth the shopper’s money.

Partake Foods, for example, highlights specific testimonials from customers and a feed of reviews for shoppers to browse.

There are many Shopify apps to collect and display reviews, including:

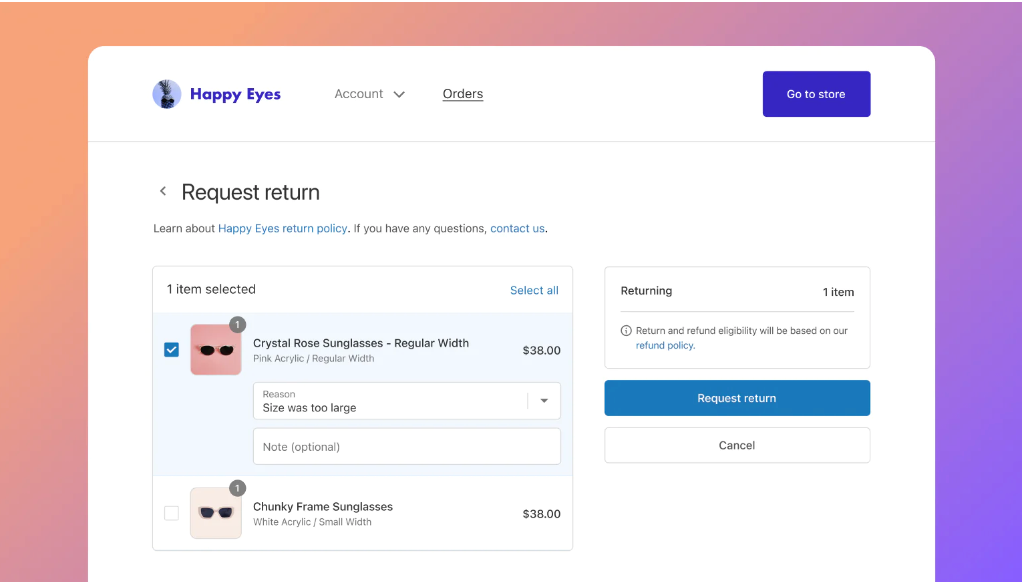

10. Make returns easy

The National Retail Federation reports that the average rate of return was 16.5% in 2022. Returns are often complex and introduce friction to the buyer’s journey, which causes people to leave items behind in their carts.

Having a proper system in place for handling returns is key. “When customers know that they can get their money back just as easily as they can spend it, they’ll shop more confidently and spend more,” says Sanaz Hajizadeh, former director of product management at Happy Returns.

Shopify’s self-serve returns solution makes it easy to handle all returns and refunds in your store.

It’s a free built-in tool you can use to centralize returns, automate notifications, generate shipping labels, and restock inventory. Shopify’s self-serve returns tool also lets you give customers full visibility of a return’s status, from beginning to end.

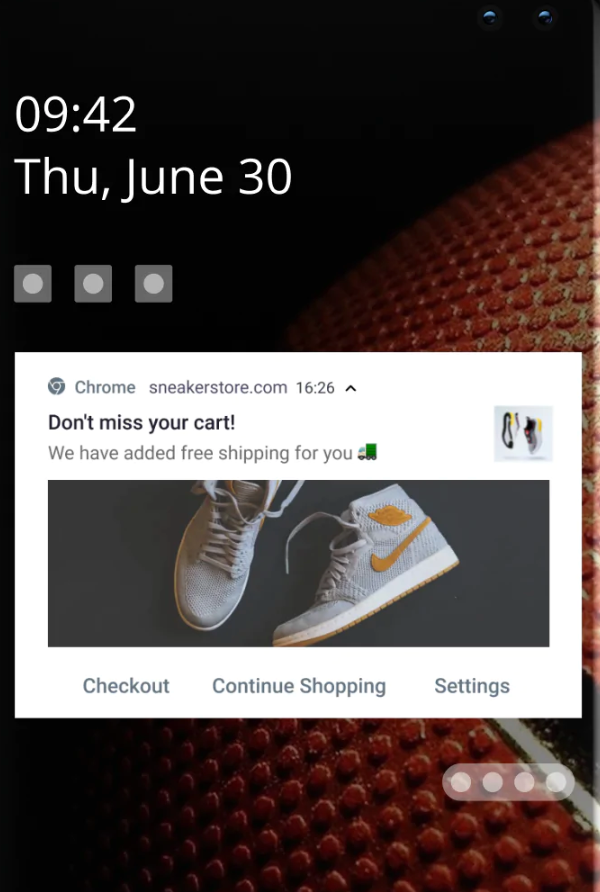

11. Send push notifications

The average click rate of web push notifications is 12%. With push notifications, you can turn anonymous web visitors into subscribers without asking for personal details like an email address or phone number. If they abandon their cart, you can send a follow-up push notification encouraging them to return and buy.
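Whether the follow-up goes out by push, email, or SMS, the trigger rests on the same idea: a cart with items, no completed order, and no recent activity. The sketch below shows one way to express that rule. The `CartSnapshot` shape, the 60-minute idle window, and the function name are hypothetical; apps such as PushOwl apply their own rules and delays.

```typescript
// Hypothetical abandoned-cart trigger: a cart qualifies for one follow-up
// (push, email, or SMS) when it has items, no completed checkout, no reminder
// sent yet, and no activity for `idleMinutes`. Field names are illustrative.
interface CartSnapshot {
  id: string;
  itemCount: number;
  lastActivity: Date;
  converted: boolean; // true once the checkout was completed
  reminderSent: boolean;
}

function cartsDueForReminder(carts: CartSnapshot[], now: Date, idleMinutes = 60): string[] {
  const cutoff = now.getTime() - idleMinutes * 60 * 1000;
  return carts
    .filter((c) => c.itemCount > 0 && !c.converted && !c.reminderSent && c.lastActivity.getTime() <= cutoff)
    .map((c) => c.id);
}

const checkTime = new Date("2023-01-10T12:00:00Z");
const due = cartsDueForReminder(
  [
    { id: "a", itemCount: 2, lastActivity: new Date("2023-01-10T10:30:00Z"), converted: false, reminderSent: false }, // idle 90 min
    { id: "b", itemCount: 1, lastActivity: new Date("2023-01-10T11:45:00Z"), converted: false, reminderSent: false }, // idle 15 min
    { id: "c", itemCount: 3, lastActivity: new Date("2023-01-10T09:00:00Z"), converted: true, reminderSent: false }, // already bought
  ],
  checkTime,
);
console.log(due); // only cart "a" qualifies
```

The `reminderSent` flag keeps the rule from notifying the same shopper repeatedly, which matters more for push than for email because push messages interrupt the shopper directly.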

You can easily set up and manage push notification campaigns with the PushOwl Shopify app. Try it today, free for 14 days. Paid plans start at $19 per month.

Say goodbye to abandoned checkouts in your Shopify store

It’s easy to forget that customers scrutinize every move they make once they reach the checkout. As with success in any area of life, reducing abandonment comes down to tried-and-true principles.

Every field, every bit of copy, every logo is scrutinized and processed, even if only subconsciously, especially if they’ve never bought from you before.

With that in mind, if you proactively find ways to reduce fears, increase trust, and reiterate the reasons people decided to buy from you, you may well turn more checkout visitors into paying customers.

Cart Abandonment FAQ

What is cart abandonment in ecommerce?

Cart abandonment in ecommerce describes what happens when a customer adds items to their shopping cart on an online store but fails to complete the checkout process. It is a common issue and can be caused by a variety of factors, including website usability issues, lack of payment options, pricing, shipping costs, and more.

What is a typical cart abandonment rate for ecommerce?

The average cart abandonment rate for ecommerce is just under 70% (69.99% in Baymard Institute’s data). However, this rate can vary depending on the type of products being sold, the checkout process, and the overall customer experience.

What are the types of abandonment in ecommerce?

Cart Abandonment: This is the most common type of abandonment and occurs when a customer adds items to their online shopping cart but does not complete the purchase.

Search Abandonment: This occurs when a customer visits a store's website but does not complete their search for a particular product.

Checkout Abandonment: This occurs when a customer begins the checkout process but does not complete the purchase.

Account Abandonment: This occurs when a customer creates an account or profile on a store’s website but does not return to complete the purchase.

Loyalty Program Abandonment: This occurs when a customer signs up for a loyalty program but does not take advantage of the benefits or discounts that come with it.
